Mark Zuckerberg previewed three headsets that Reality Labs is working on, offering a glimpse of the future of the metaverse. In doing so, Meta’s CEO introduced an interesting concept: the “visual Turing test”, which will serve as the ultimate proof that the metaverse has become indistinguishable from the real world.

What is the “visual Turing test” announced by Zuckerberg

As Zuckerberg himself explained, together with Michael Abrash, Chief Scientist of Meta’s Reality Labs, on the CEO’s Facebook profile, perfect VR rests on four concepts, and Meta is working on all four.

First, the headset must deliver perfect (20/20) vision, with no need for extra eyeglasses. Second, the device must be able to track eye movements and change focus in a natural way, so that both distant and near objects can be seen clearly. Third, it must correct the optical distortions of the lenses currently on the market. Finally, it needs HDR (High Dynamic Range) to show bright colors, shadows and depth.

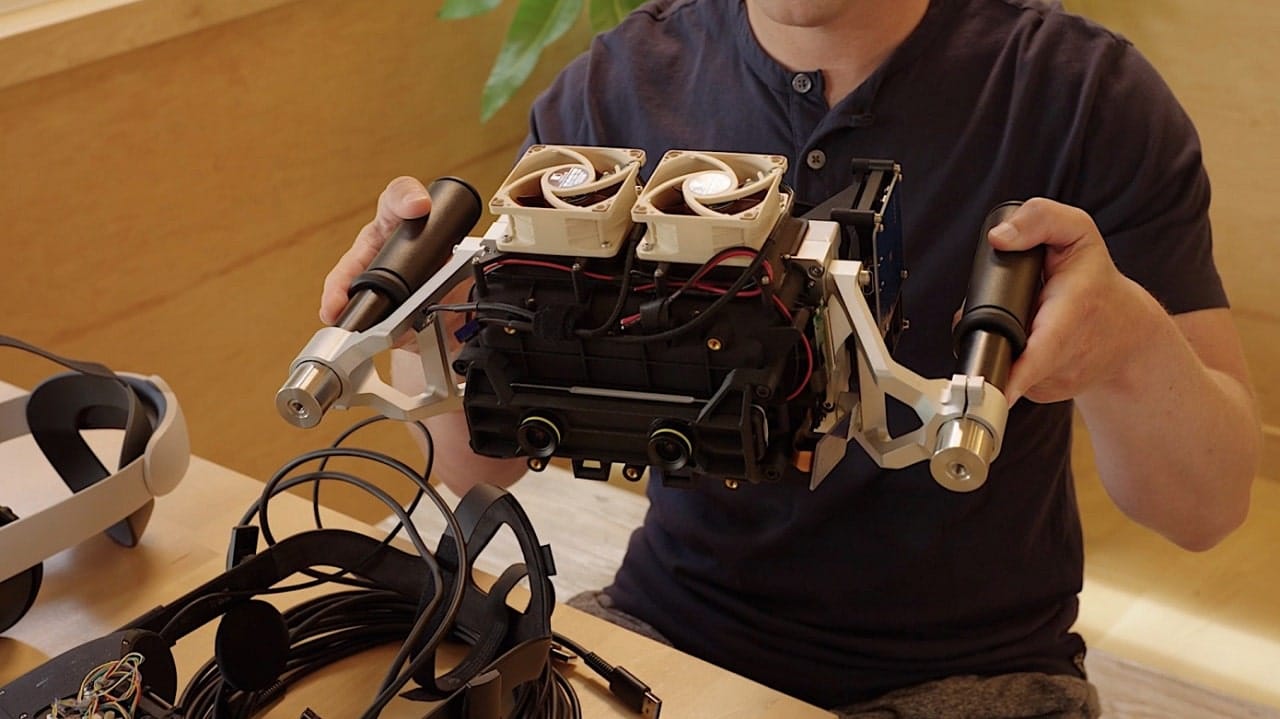

The company is therefore working on various prototypes, each exploring one of these four challenges, with the ultimate goal of combining all these features in a single, truly perfect headset.

According to Zuckerberg: “Displays that match the full capabilities of human vision will unlock some really cool things. The first is a realistic sense of presence, and this is the feeling of being with someone or somewhere as if you were physically there. And given our focus on helping people connect, you can understand why it’s so important to us.”

Only when the virtual reality shown in a headset is indistinguishable from the reality around us will that headset pass the “visual Turing test”. The name refers to the thought experiment Alan Turing proposed in 1950, in which he imagined a machine whose answers were indistinguishable from those of a human. This is the visual version: the test is passed when the world rendered by the machine is impossible to tell apart from the real one.

Zuckerberg explained that Project Cambria, the headset Meta will launch later this year, is still a long way from this goal. But Meta is working on it, and it has already created several prototypes that come close.
