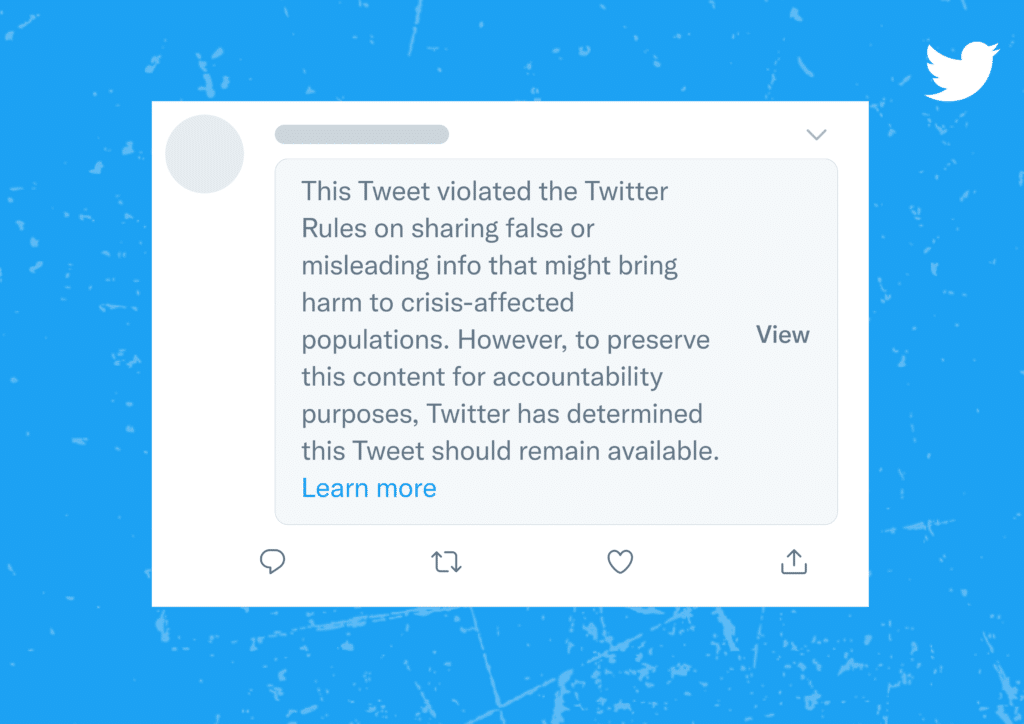

Twitter has a new policy for moments of crisis. The platform has reportedly set new standards to remove, or block the promotion of, tweets that are seen as a vehicle for fake news. “Content moderation is more than leaving or removing content,” explained Yoel Roth, who is responsible for safety and integrity at Twitter. “And we have broadened the range of actions we could take to ensure they are proportionate to the severity of the potential damage.” The platform, in other words, is taking a new approach to fake news.

Twitter: ready to stop disinformation in difficult times

The new Twitter policy pays particular attention to false reporting of events, false allegations involving weapons or the use of force, and broader misinformation about atrocities or the international response. In short, the goal is to stop the spread of fake news, which tends to go highly viral in times of emergency. Because of the perceived danger, as in the case of Covid-19, users rush to share unverified information, and the platform's moderators cannot keep up with the sheer volume of content being published. It is a case of a “dog chasing its own tail”, and it creates quite a few problems for Twitter.

Credits: Twitter

Now, however, the platform seems to have found a solution. Under the new policy, tweets classified as disinformation will not necessarily be removed or banned. Instead, they will be marked with a warning label that requires users to click a button before viewing the tweet, and they will be excluded from algorithmic promotion. These stricter standards are meant to apply only in very specific situations, such as Russia's invasion of Ukraine, and in all future crises. To be clear, Twitter has defined crises as all those “situations in which there is a widespread threat to life, physical safety, health or basic subsistence”.
