After a first launch in the United States, Apple is now ready to expand its “Communication Safety” feature to Australia, Canada, New Zealand and the United Kingdom. What is it, exactly? An option designed to automatically blur nude images sent to children via Messages. By scanning images on the device, Apple aims to protect younger users on iOS. Let’s look at it in more detail.

iOS: Apple rolls out the feature that protects children from nude pictures

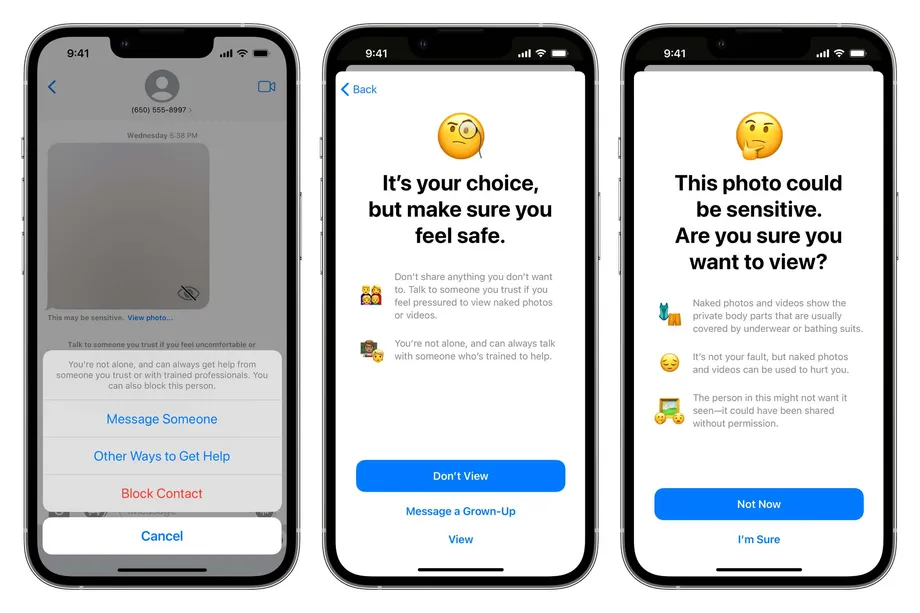

After a first test in the US, Apple is now bringing the iOS feature that blurs nude images in Messages to four more countries. How it works is simple: it scans incoming and outgoing images in messages for “sexually explicit” material. If such material is found, the photo is blurred and iOS shows the user a series of messages advising against viewing the image: “You are not alone and you can always get help from someone you trust or qualified professionals. You can also block this person.”

This is not the only child safety feature Apple is working on, however. The Cupertino company is also expanding the launch of a new feature for Spotlight, Siri and Safari searches that directs users to safety resources when they search for topics related to child sexual abuse. Apple had originally announced a third feature alongside these two: in August it planned to scan photos for child sexual abuse material (CSAM) before they were uploaded to a user’s iCloud account. That feature, however, sparked such an intense reaction from privacy advocates that its release was put in doubt. Given that Apple has now released the first two options on iOS, we expect the third to arrive as well. When? That is not yet known.
